In my previous post, I described vShield Endpoint. In this post, I will talk about the only real product that is actually designed around this concept and uses it: Trend Micro Deep Security 7.5.

Before I get into the details, I would like to thank Trend Micro Australia for supporting me whenever I got stuck. Thanks, guys.

What can Trend Micro Deep Security 7.5 do?

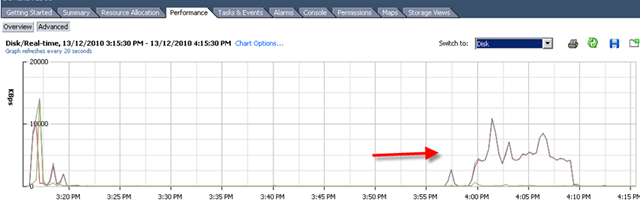

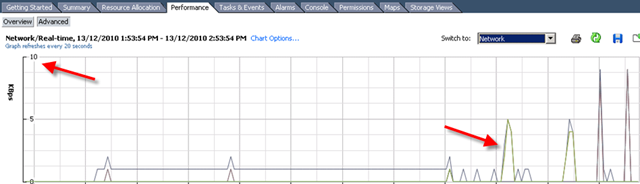

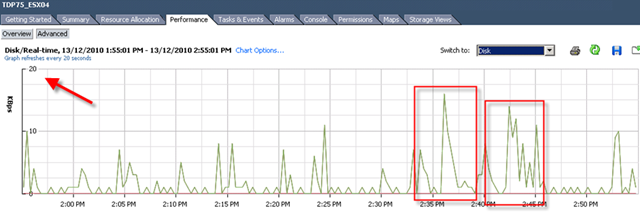

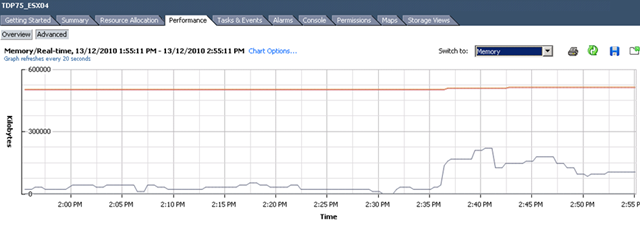

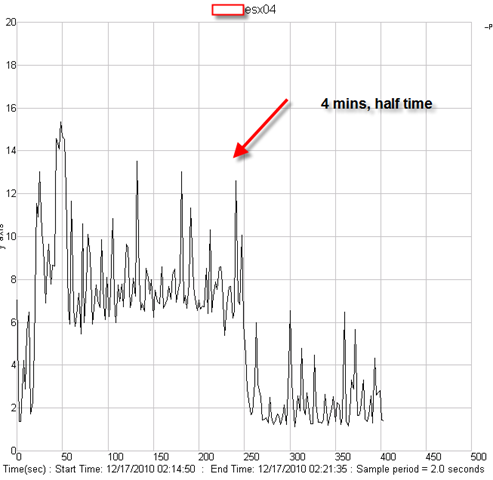

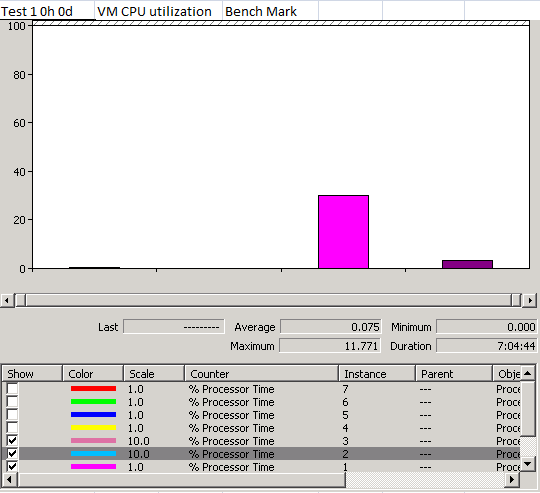

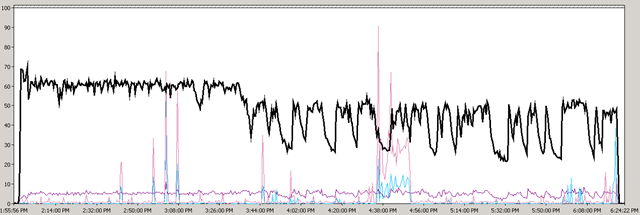

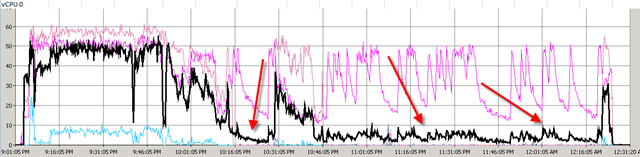

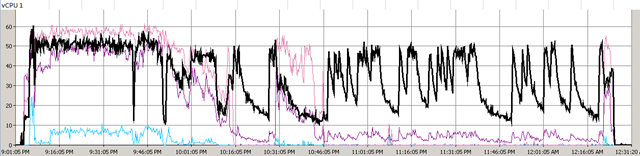

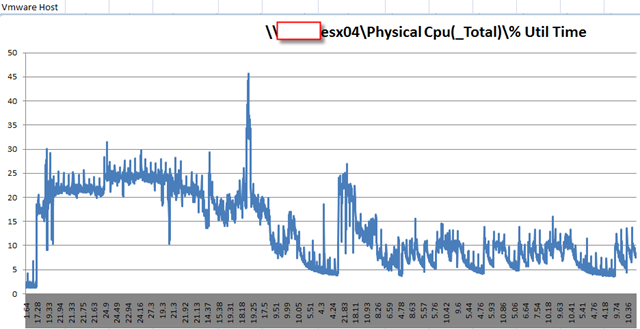

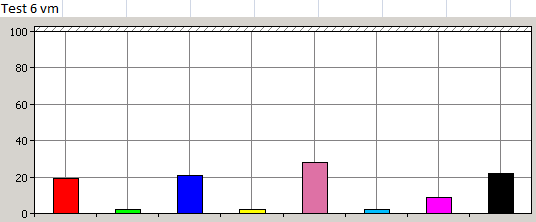

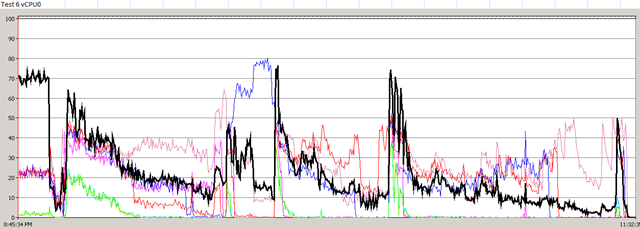

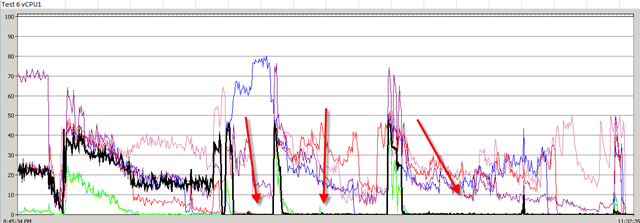

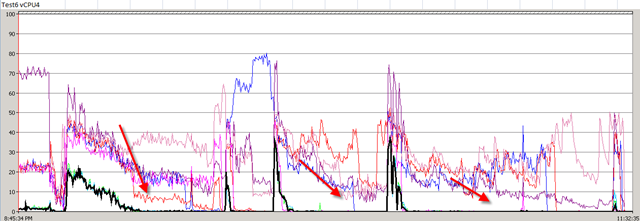

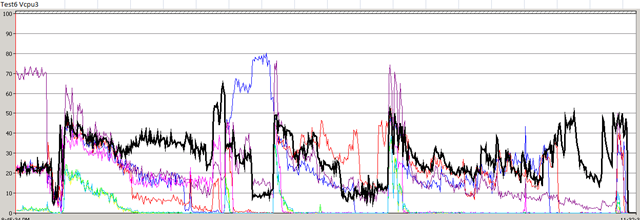

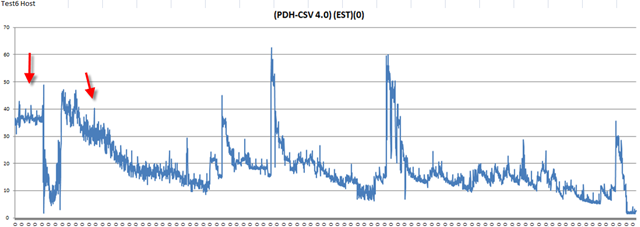

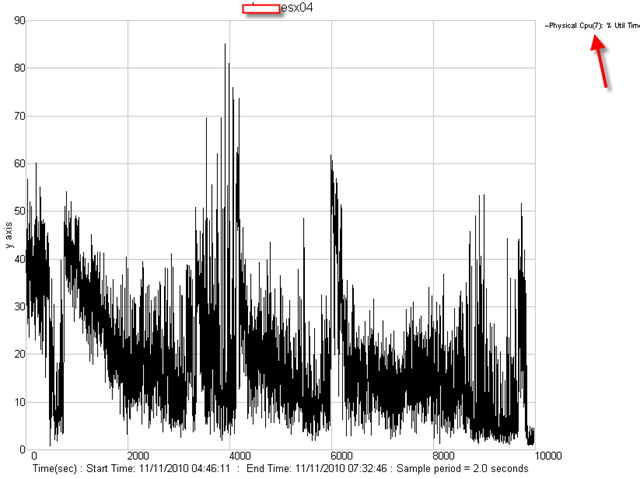

The first time I saw this product was at a VMware seminar, when a Trend Micro representative stood on the stage and demonstrated how Deep Security can scan a virtualized environment using only 20% of the resources. That was mind-blowing, because imagine VDI desktops and VMs all calling for a scheduled scan at the same time. How much pressure would that put on the ESX host? This product only works with vSphere 4.1. It uses vShield Endpoint, and it must use vShield Endpoint, to do its job. Well, at least, that’s what Trend Micro claimed. So is this true? Please continue to read.

Note: DS 7.5 is designed purely for VM environments, which means it is not a complete solution at this stage. If you want to protect your physical boxes or workstations, you are still better off using OfficeScan.

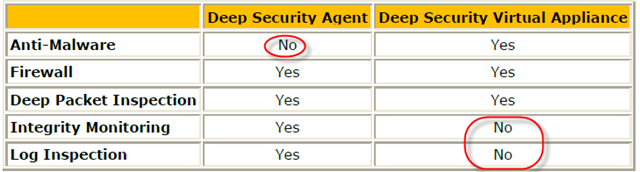

Deep Security provides comprehensive protection, including:

- Anti-Malware (detects and cleans viruses)

- Intrusion Detection and Prevention (IDS/IPS) and Firewall (malicious attack pattern protection)

- Web Application Protection (malicious attack pattern protection)

- Application Control (malicious attack pattern protection)

- Integrity Monitoring (traces registry and file modifications)

- Log Inspection (inspects logs and events on the VM)

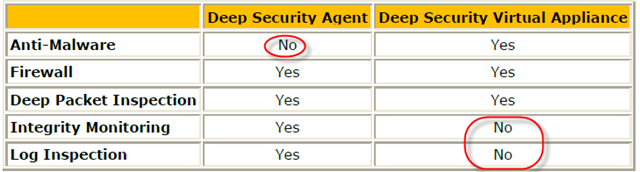

The interesting thing about DS 7.5 and vShield Endpoint is that neither product can provide a complete solution for end users on its own. Each of them plays a certain role in the system, so the result is actually a combination of both.

Let’s take a look at this in a clear table.

Note:

My suggestion is to install both the vShield Endpoint agent and the DS Agent on your VMs. That’s the only way you can protect your VMs.

Components of Deep Security 7.5

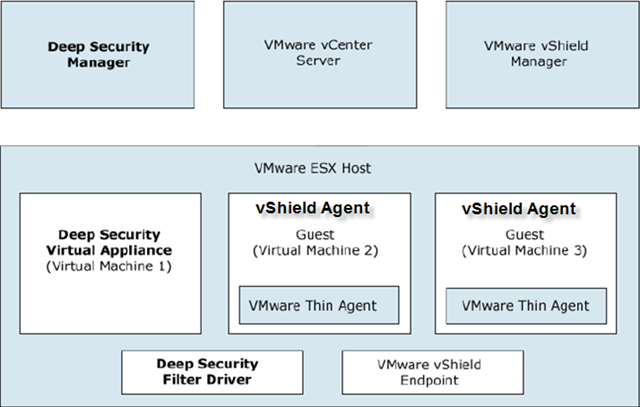

Deep Security consists of the following set of components that work together to provide protection:

Deep Security Manager is the centralized management component that administrators use to configure security policy and deploy protection to the enforcement components: Deep Security Virtual Appliance and Deep Security Agent. (You need to install it on a Windows server.)

Deep Security Virtual Appliance is a security virtual machine built for VMware vSphere environments that provides Anti-Malware, IDS/IPS, Firewall, Web Application Protection and Application Control protection. (It will be pushed from DS Manager to each ESX host.)

Deep Security Agent is a security agent deployed directly on a computer, which can provide IDS/IPS, Firewall, Web Application Protection, Application Control, Integrity Monitoring and Log Inspection protection. (It needs to be installed on the protected VMs.)
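To make the split between the two enforcement components easier to see, here is a small Python sketch that simply writes the module lists from this post down as sets and works out what is missing when only one component is in place. The names `COMPONENT_MODULES` and `missing_modules` are my own for illustration; they are not part of any Trend Micro tool or API.

```python
# Protection modules per component, copied from the descriptions in this post.
# The dictionary keys and function name are illustrative, not a Trend Micro API.
COMPONENT_MODULES = {
    "virtual_appliance": {
        "Anti-Malware",
        "IDS/IPS",
        "Firewall",
        "Web Application Protection",
        "Application Control",
    },
    "agent": {
        "IDS/IPS",
        "Firewall",
        "Web Application Protection",
        "Application Control",
        "Integrity Monitoring",
        "Log Inspection",
    },
}

# Every module offered by at least one component.
ALL_MODULES = set().union(*COMPONENT_MODULES.values())


def missing_modules(installed):
    """Return the modules that no installed component provides."""
    covered = set()
    for component in installed:
        covered |= COMPONENT_MODULES[component]
    return ALL_MODULES - covered


if __name__ == "__main__":
    print(sorted(missing_modules(["virtual_appliance"])))
    print(sorted(missing_modules(["agent"])))
    print(sorted(missing_modules(["virtual_appliance", "agent"])))
```

Going by the lists above, the appliance alone leaves out Integrity Monitoring and Log Inspection, and the agent alone leaves out Anti-Malware, which is why I end up using both.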

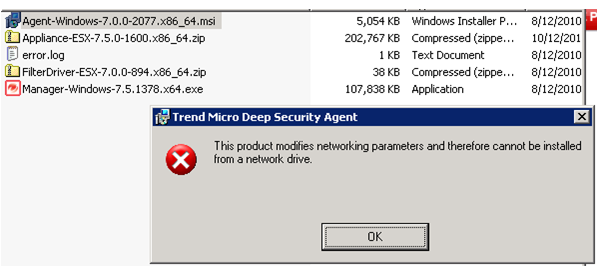

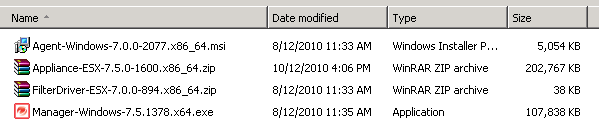

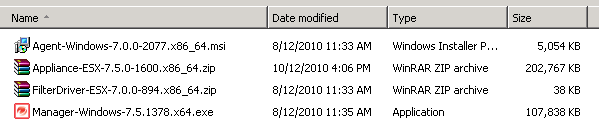

As a matter of fact, you need to download the following files from the Trend Micro website. Don’t forget to download the filter driver, which will be pushed from DS Manager to each ESX host.

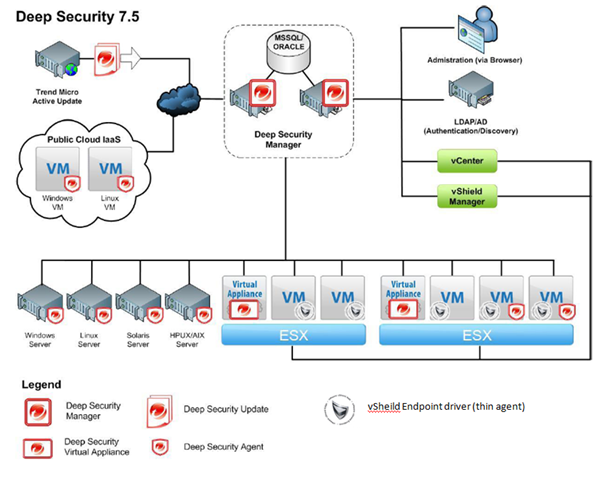

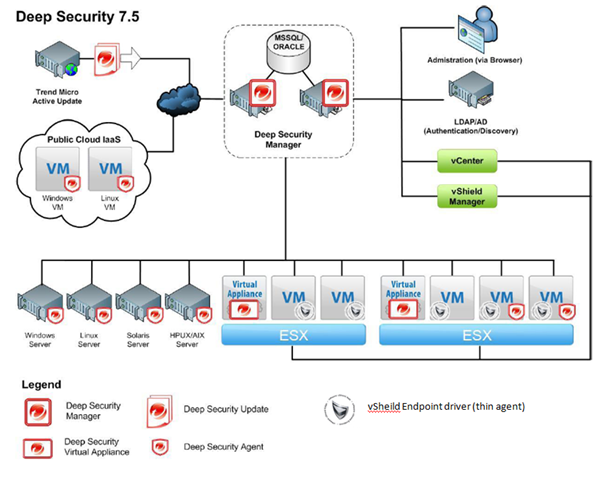

Architecture of Deep Security 7.5

Let’s take a look.

There should be only one DS Manager, unless you want redundancy.

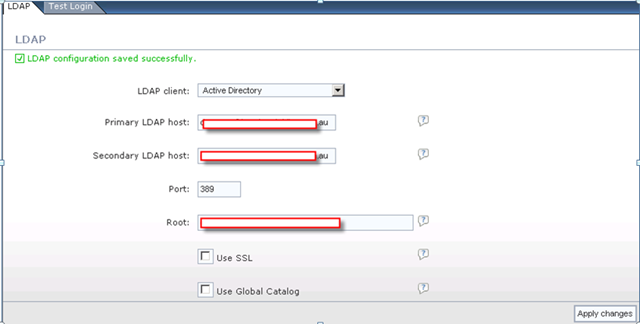

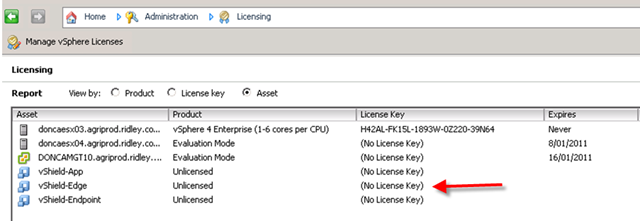

Each ESX host must have vShield Endpoint installed.

Each ESX host has its own virtual appliance.

Each VM should have both the vShield Endpoint agent and the DS Agent installed.

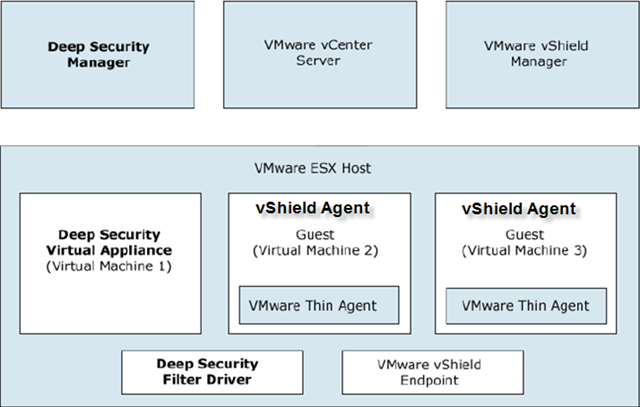

How does Deep Security 7.5 work?

For malware and virus checking:

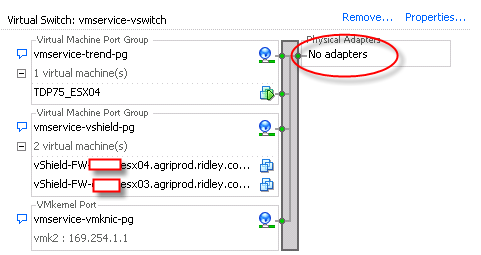

DS uses vShield Endpoint to monitor the memory of protected VMs. The vShield Endpoint agent (also known as the vShield Endpoint thin driver) opens a special channel that allows the DS virtual appliance to scan the VM’s memory via a special vSwitch, which runs at the ESX kernel driver layer.

VMware needs to guarantee the isolation of each VM’s traffic, memory and hard disk, and no other application should breach this protection. vShield Endpoint is therefore a back door opened by VMware to let third parties scan VM content legally and logically.

For registry keys, logs and other components of the VM, we have to rely on the DS Agent, because vShield Endpoint can only do so much. That’s why the solution must combine both vShield Endpoint and the DS Agent.

Install Deep Security 7.5

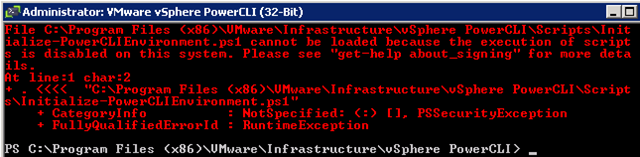

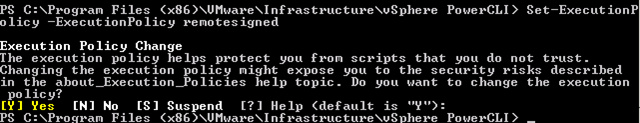

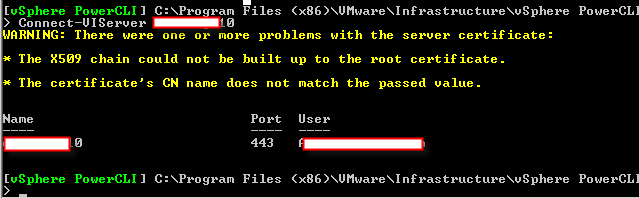

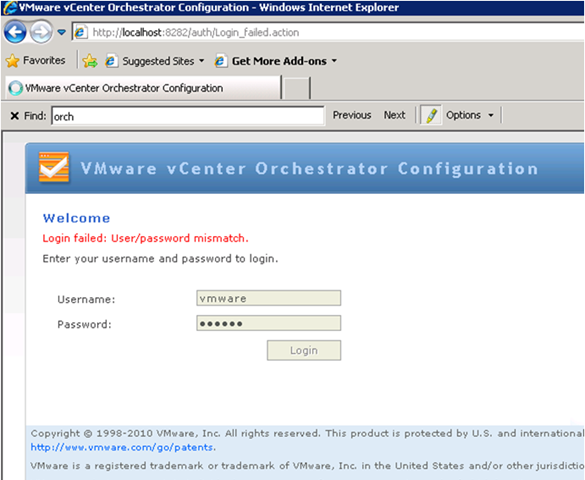

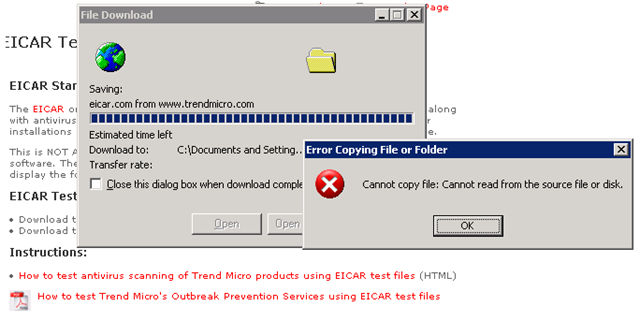

I did encounter some interesting errors during the installation.

But let’s sort out the installation steps first.

- Install Endpoint on your VMware ESX hosts.

- Install DS Manager on one of your Windows boxes.

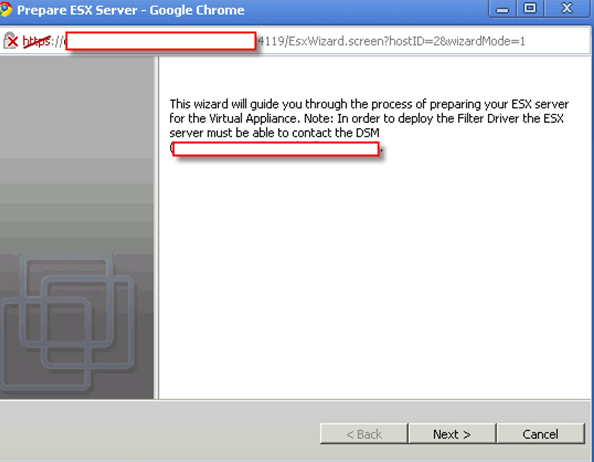

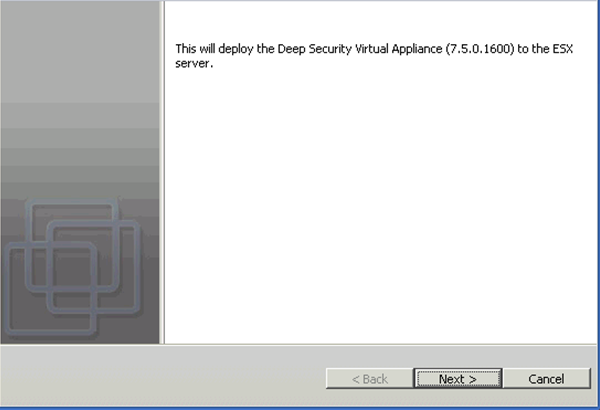

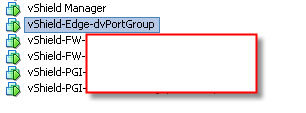

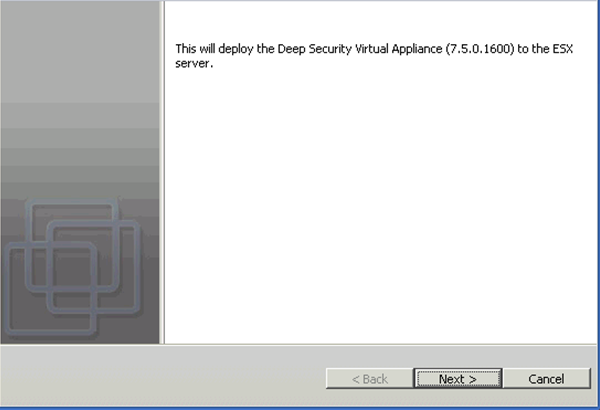

- Push the virtual appliance and the filter driver to each ESX host. This adds an appliance to the vShield-protected vSwitch. The filter driver is loaded into the ESX kernel.

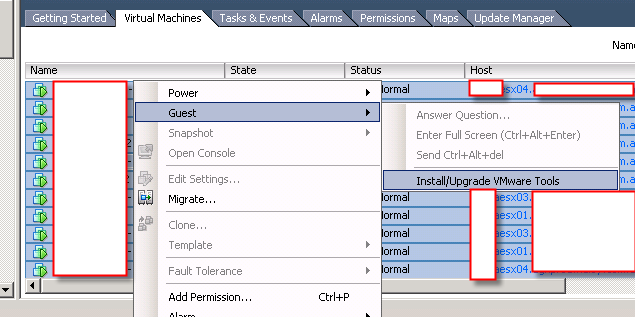

- Install the DS Agent and the vShield Endpoint agent on the VMs you want to protect.

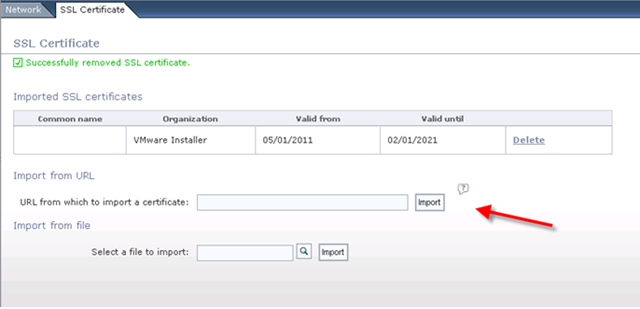

Install Endpoint on your VMware ESX hosts

Please see my previous post on vShield Endpoint for how to do it.

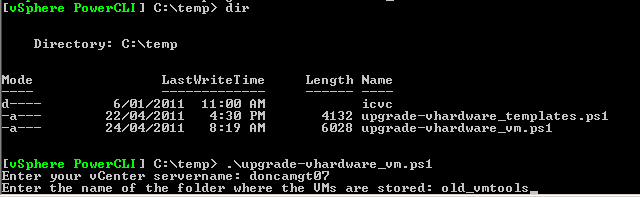

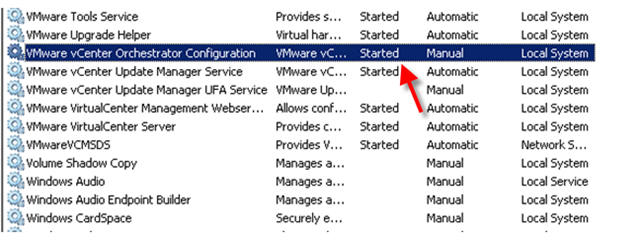

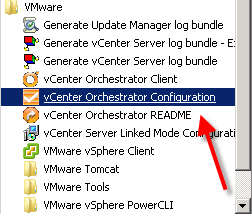

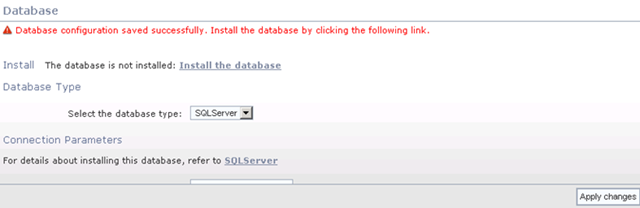

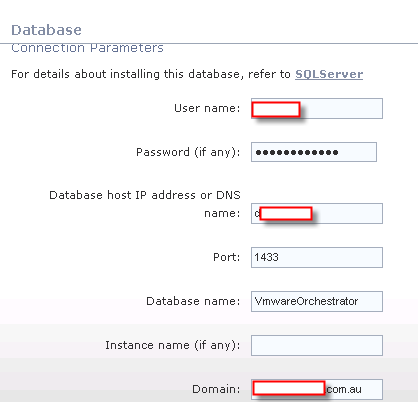

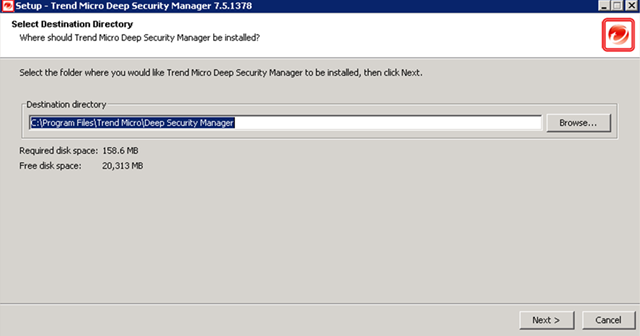

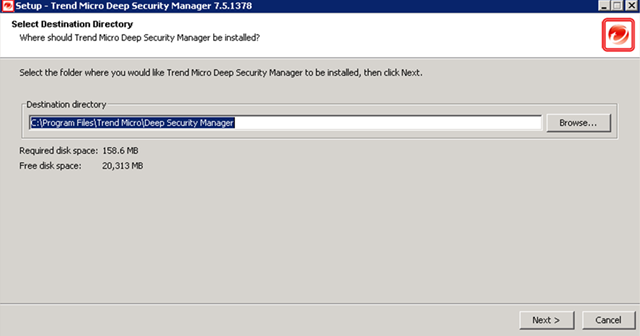

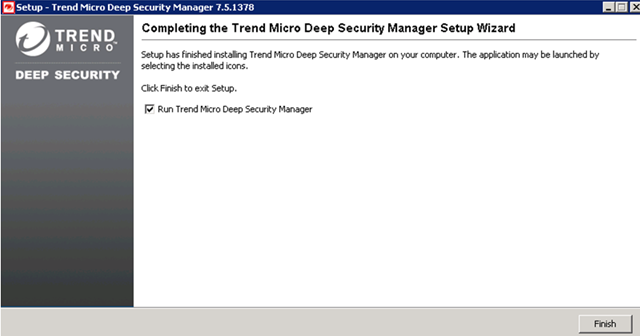

Install DS Manager on one of your Windows boxes

These are easy steps. I believe any admin can do this job well.

Let me skip some of the easy parts.

Skip, skip.

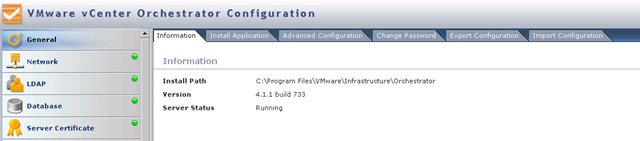

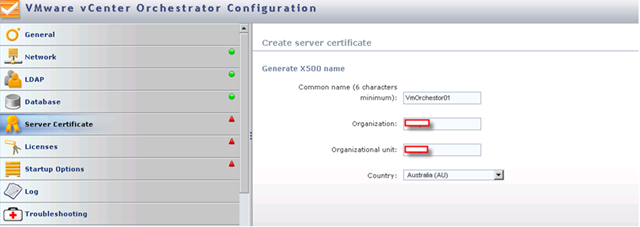

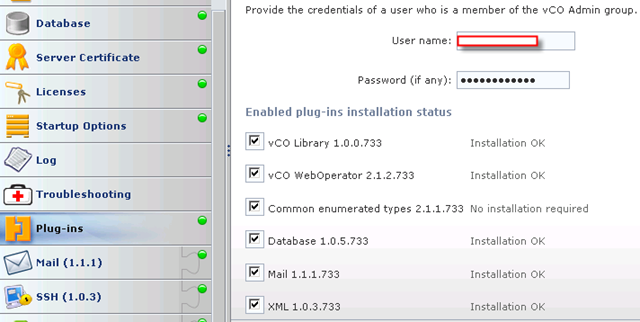

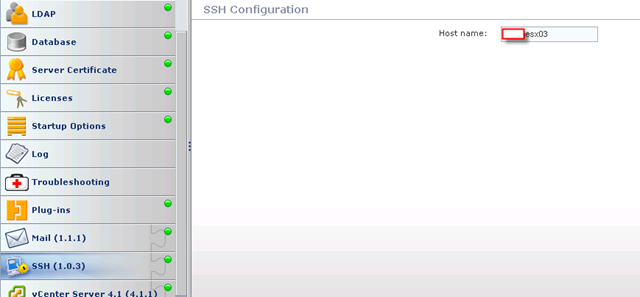

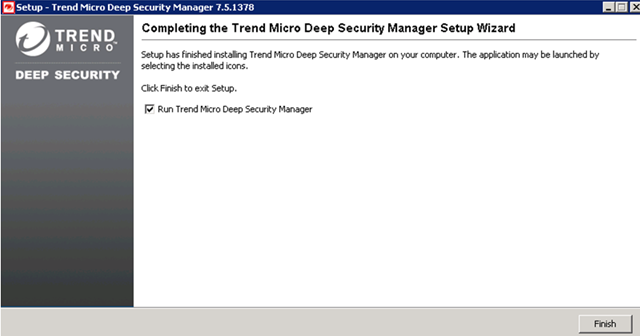

Once you finish installing DS Manager, you need to configure it.

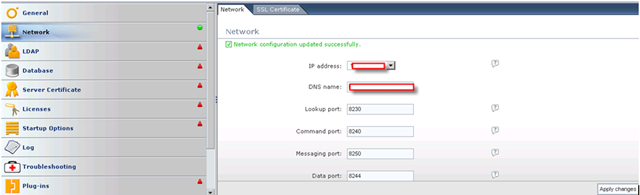

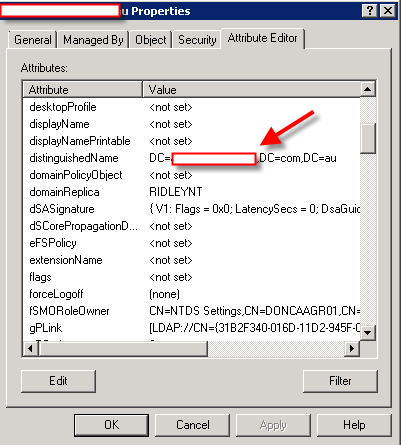

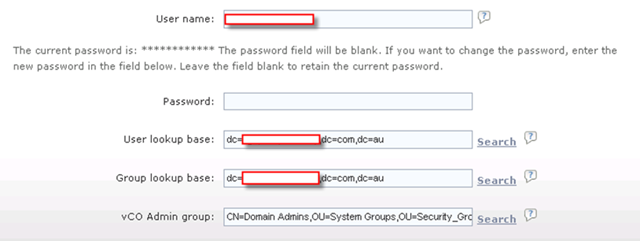

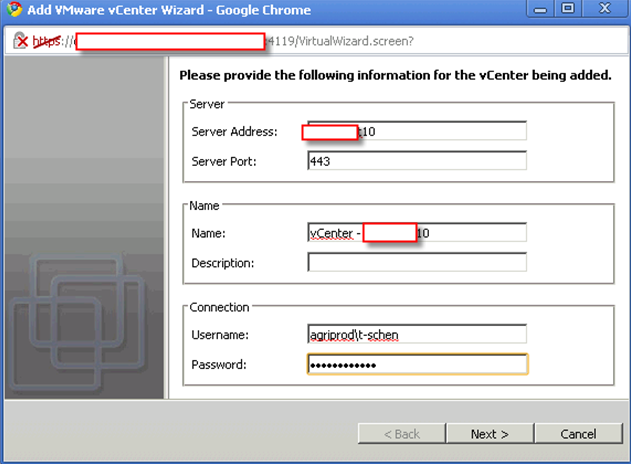

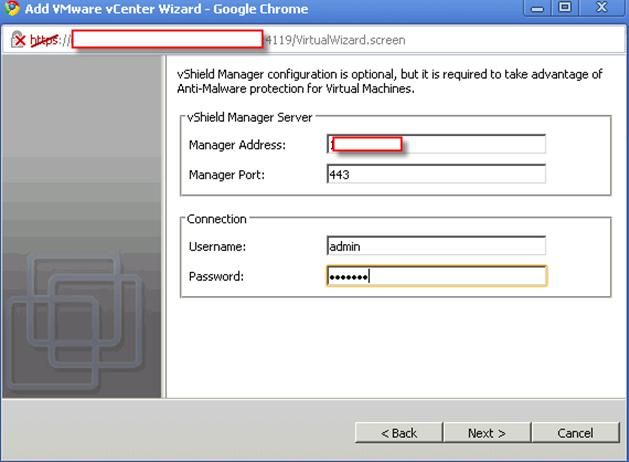

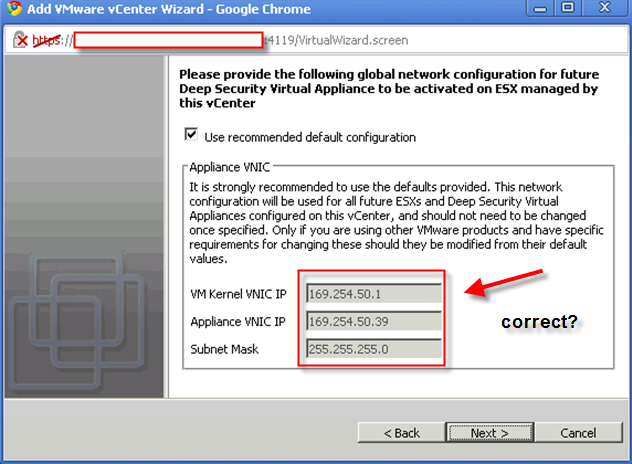

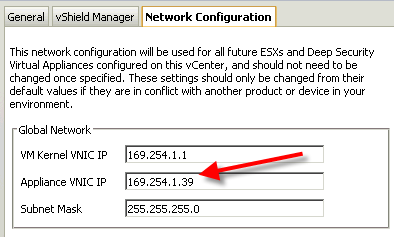

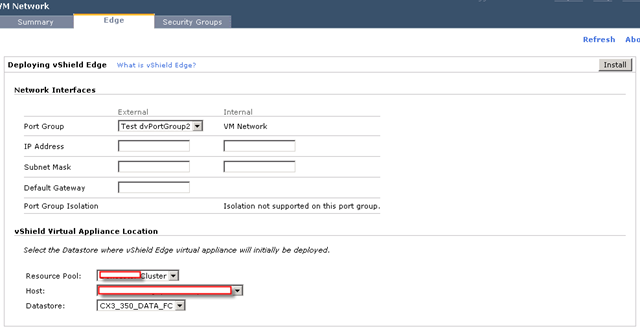

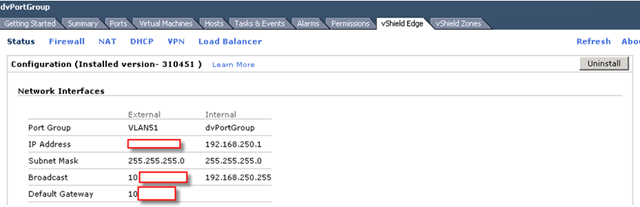

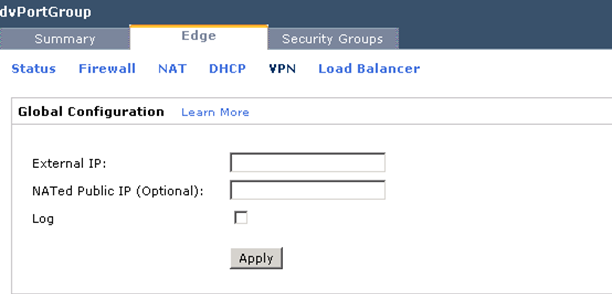

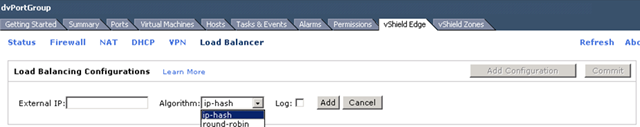

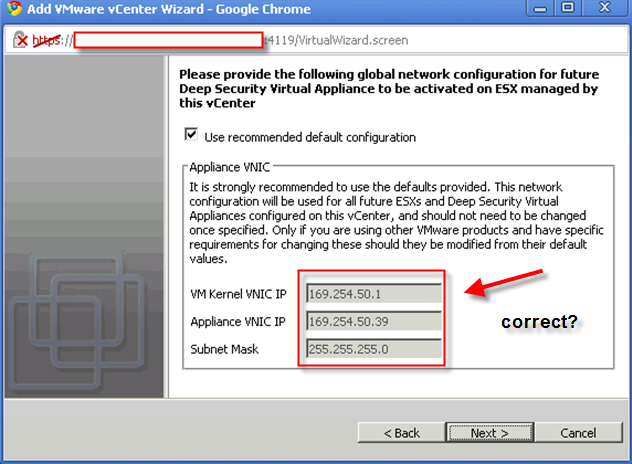

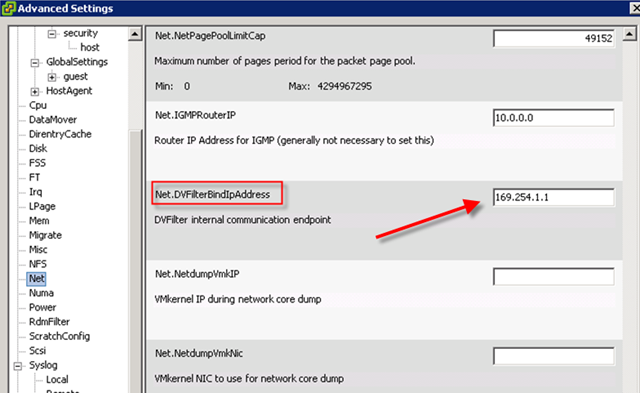

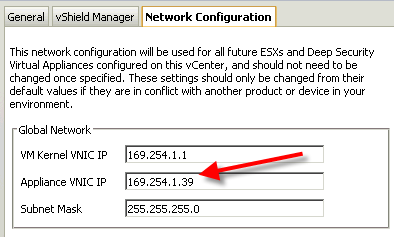

This is the really tricky part. What are those IPs for?

The answer is that those IPs must not already be in use, and they must be in the same subnet as the rest of your vShield components.
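If you want to sanity-check an address before typing it into the wizard, the two conditions above (same subnet, not already taken) can be sketched with Python’s standard `ipaddress` module. The function name `usable_for_vshield` is my own, and the addresses below are documentation placeholders, not real vShield defaults; substitute the subnet you find on your own ESX host.

```python
import ipaddress


def usable_for_vshield(candidate, vshield_subnet, in_use):
    """Check a candidate IP against the two rules from this post.

    candidate      -- the IP you plan to enter, e.g. "192.0.2.50"
    vshield_subnet -- your vShield subnet in CIDR form, e.g. "192.0.2.0/24"
    in_use         -- IPs already taken by other vShield components
    """
    network = ipaddress.ip_network(vshield_subnet, strict=False)
    address = ipaddress.ip_address(candidate)
    taken = {ipaddress.ip_address(ip) for ip in in_use}
    # Rule 1: same subnet as the other vShield components.
    # Rule 2: not already occupied.
    return address in network and address not in taken


if __name__ == "__main__":
    subnet = "192.0.2.0/24"  # placeholder subnet, replace with your own
    occupied = ["192.0.2.1", "192.0.2.2"]  # placeholder addresses
    for ip in ("192.0.2.50", "192.0.2.1", "198.51.100.5"):
        print(ip, usable_for_vshield(ip, subnet, occupied))
```

This only checks against the list of addresses you give it. It does not probe the network, so you still need to know which IPs your vShield components already use.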

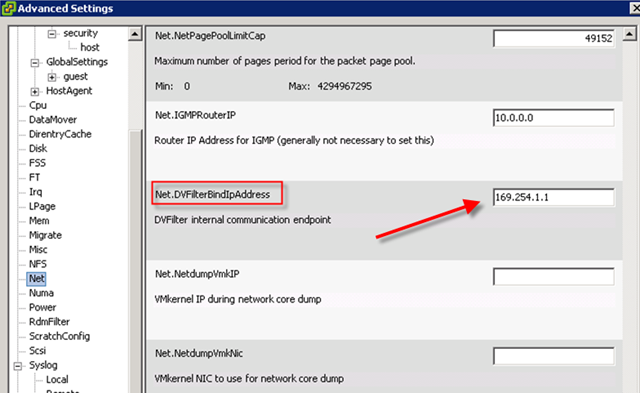

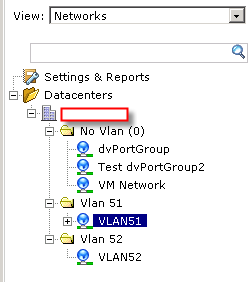

Check out this diagram and find out your own vShield subnet.

On your ESX host (which already has Endpoint installed), you should find this.

So what’s your vShield subnet?

The rest is the easy part. Skip, skip.

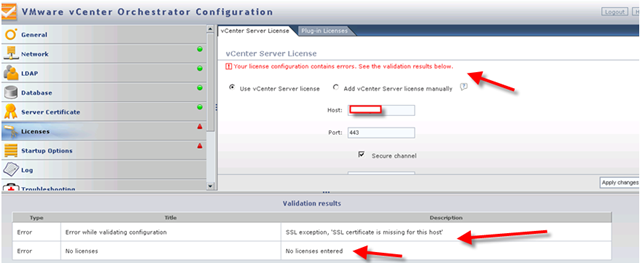

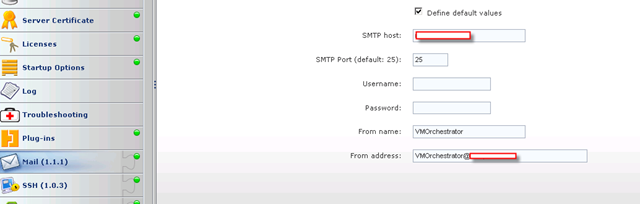

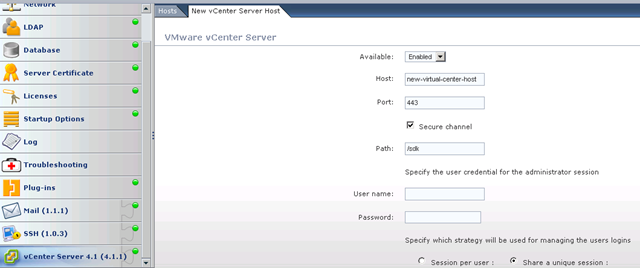

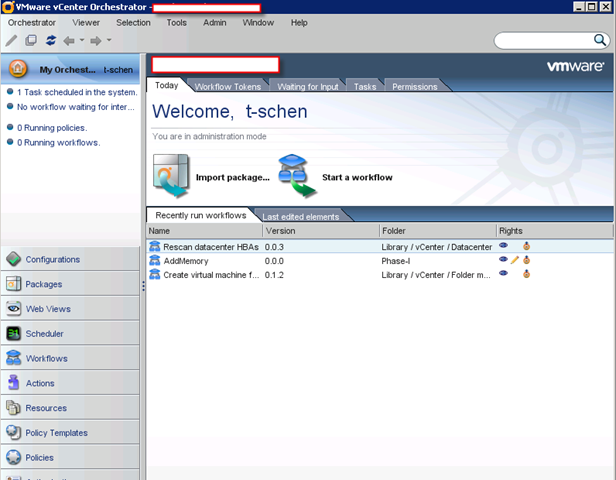

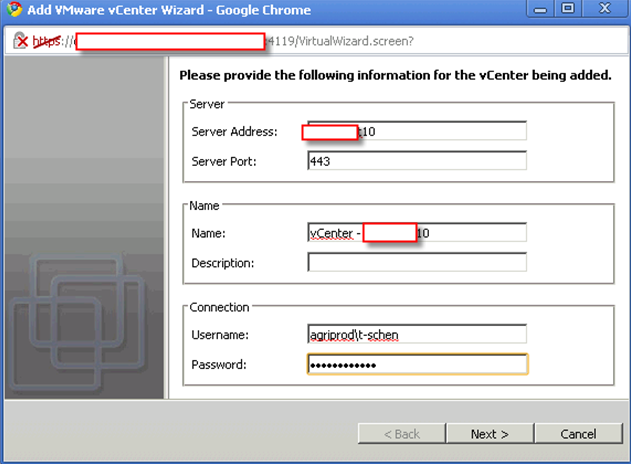

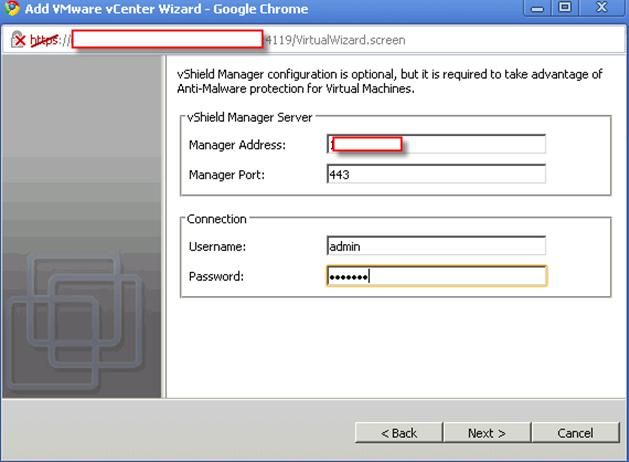

Basic configuration of DS Manager

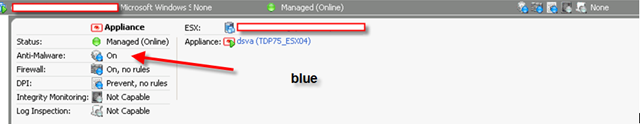

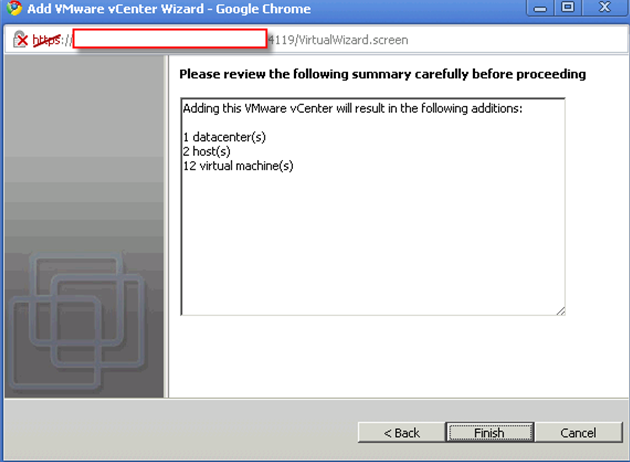

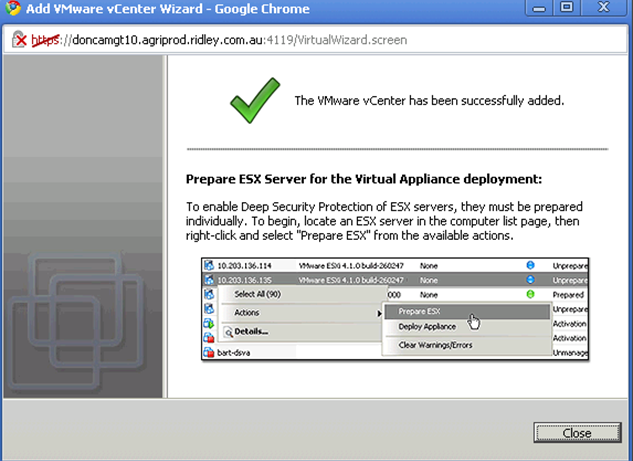

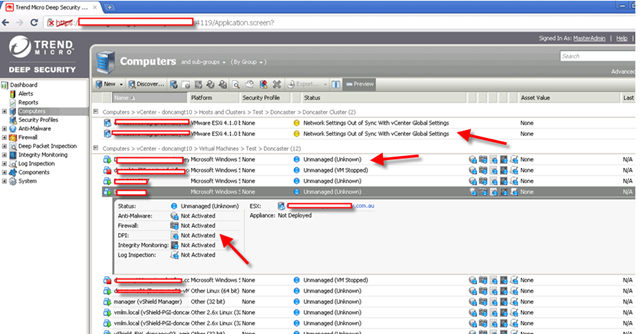

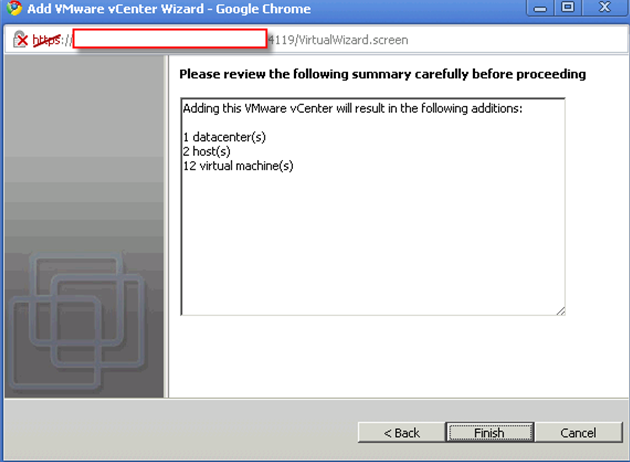

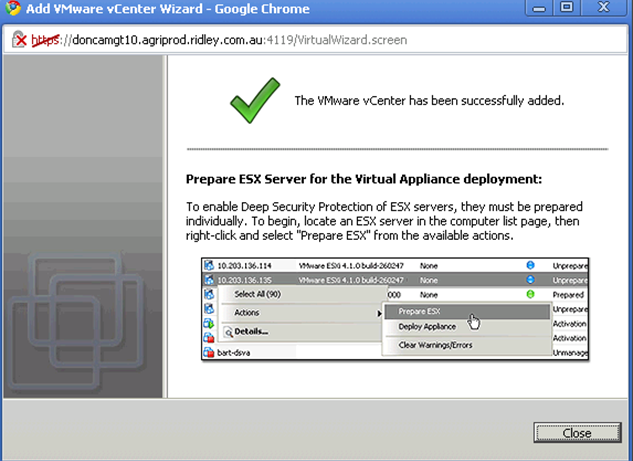

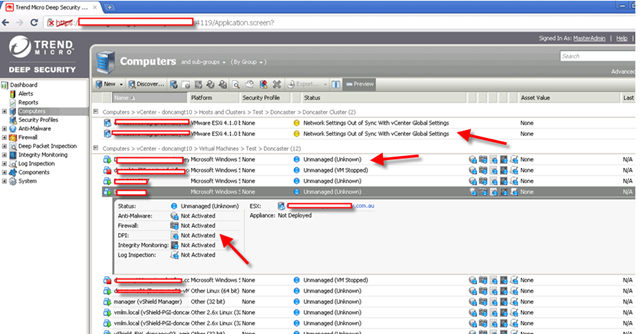

By now, you have already connected to vCenter and vShield Manager. You should see something like this.

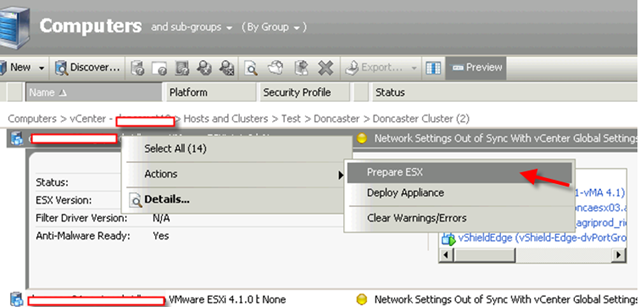

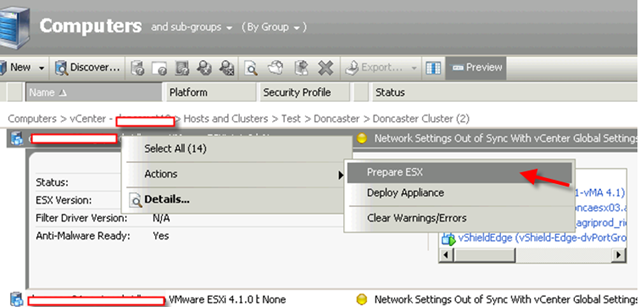

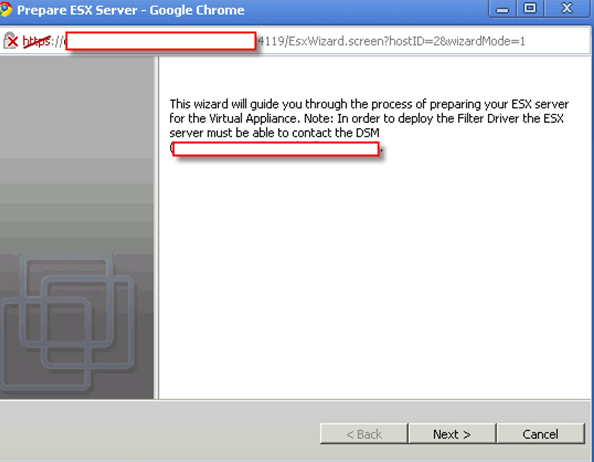

Notice that nothing is actually managed and ready yet. That’s because you need to “Prepare ESX”.

Note:

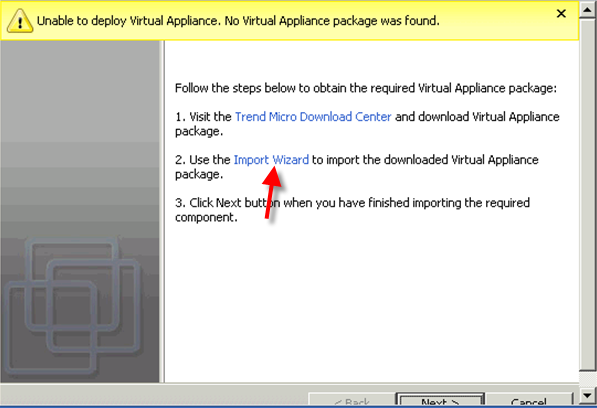

Before you “Prepare ESX”, make sure vShield Endpoint is already installed and you have already downloaded all the DS components.
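Those two prerequisites can be written down as a tiny pre-flight checklist. This is only a sketch in Python for keeping track of things by hand: the function `prepare_blockers` and the component labels in `REQUIRED_DOWNLOADS` are names I made up based on the components mentioned in this post, and nothing here talks to DS Manager or the ESX host.

```python
# Components this post says must be downloaded before "Prepare ESX".
# The labels are my own shorthand, not official package names.
REQUIRED_DOWNLOADS = {"virtual appliance", "filter driver", "agent"}


def prepare_blockers(endpoint_installed, downloaded):
    """Return a list of reasons why "Prepare ESX" should not be run yet."""
    blockers = []
    if not endpoint_installed:
        blockers.append("vShield Endpoint is not installed on the host")
    # Report every required download that is still missing, in a stable order.
    for item in sorted(REQUIRED_DOWNLOADS - set(downloaded)):
        blockers.append(f"missing download: {item}")
    return blockers


if __name__ == "__main__":
    for reason in prepare_blockers(endpoint_installed=False, downloaded={"agent"}):
        print(reason)
```

An empty list means both conditions from the note above are met.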

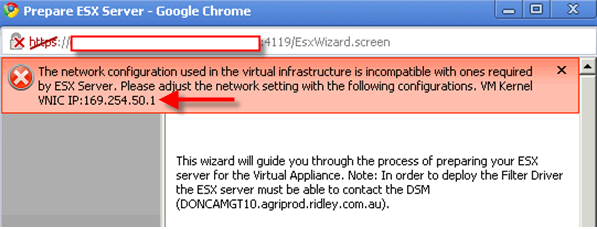

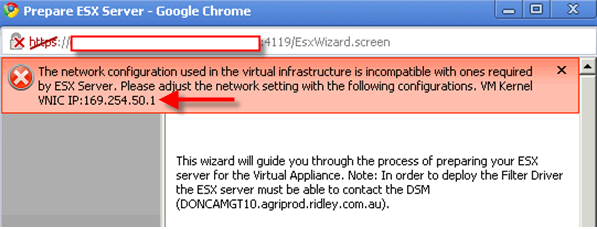

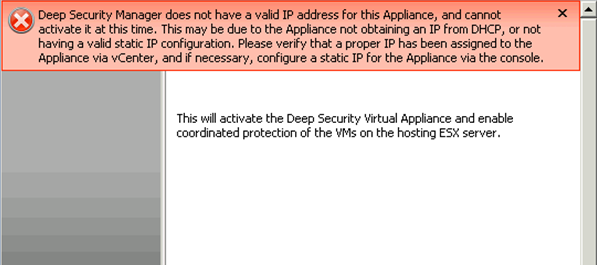

If you didn’t set up your vShield subnet correctly, you will run into this error.

In my case, I just needed to right-click vCenter -> Properties -> Network Configuration.

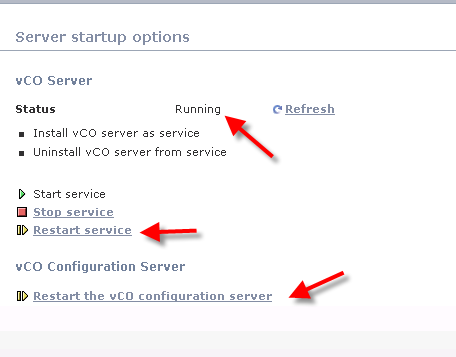

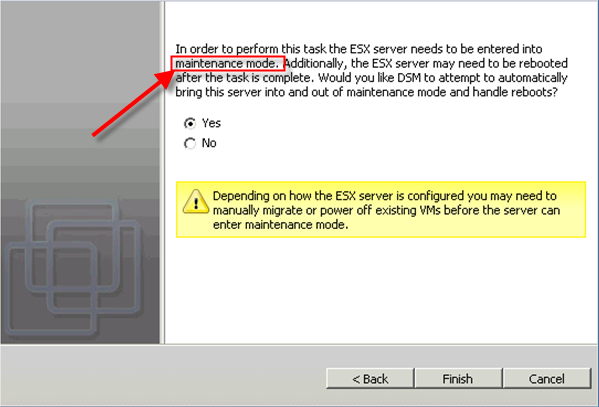

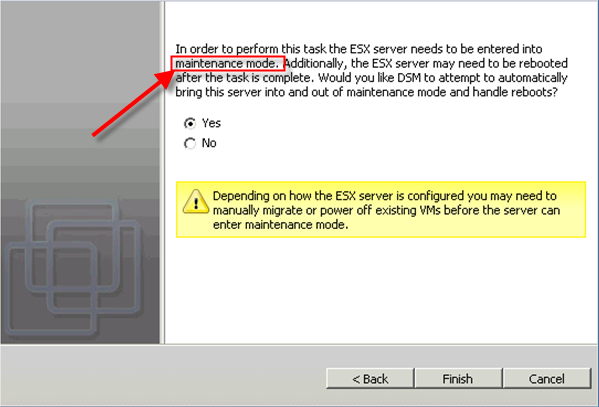

Please be aware that you need to put your ESX host into maintenance mode and restart it in order to push the DS virtual appliance and the filter driver.

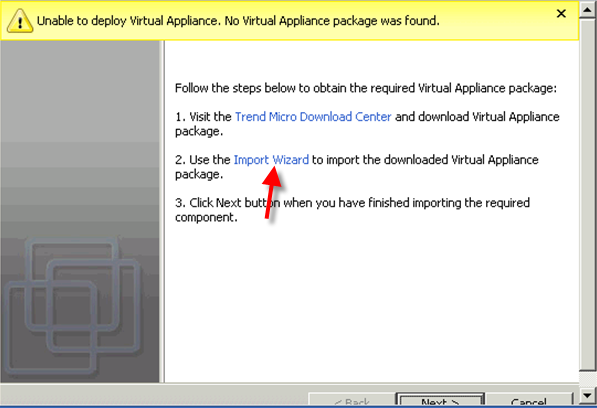

You need to import your downloaded files into DS Manager. If you didn’t import them before, you will have a chance to import them again or download them.

As usual, I will skip some steps.

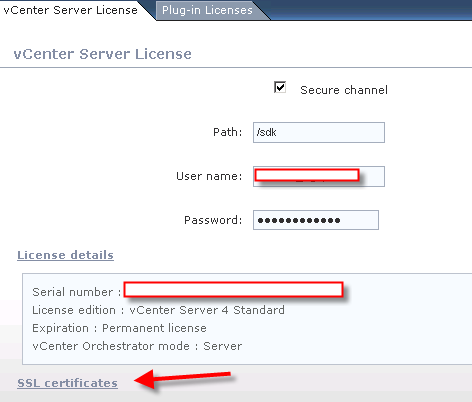

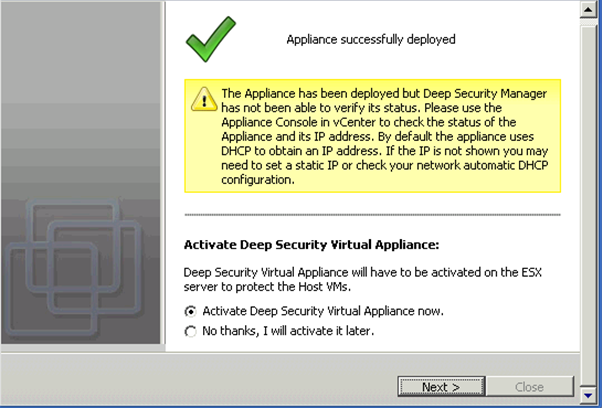

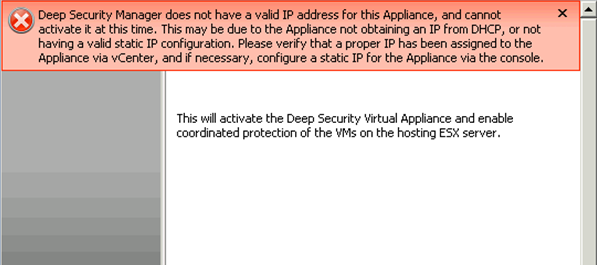

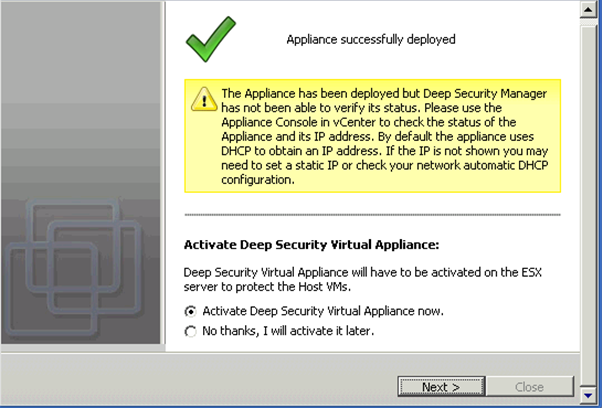

Here is another tricky part. My ESX host has a different default IP from the DS default, so once DS Manager deployed the virtual appliance to the ESX host, the appliance only had a default DHCP IP, which was wrong in my case, and its virtual network was wrong as well. This is the problem I encountered.

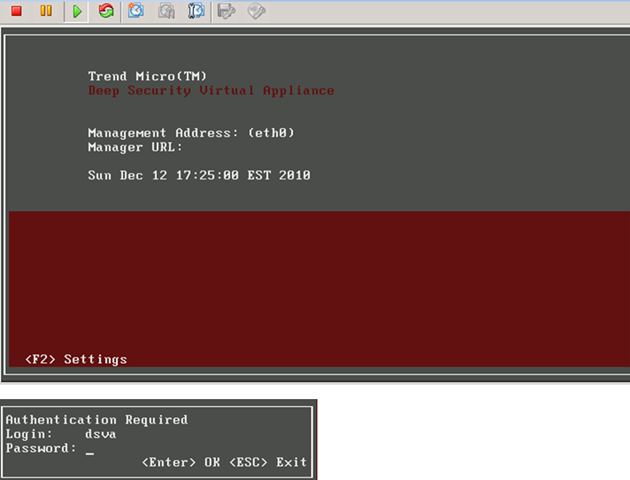

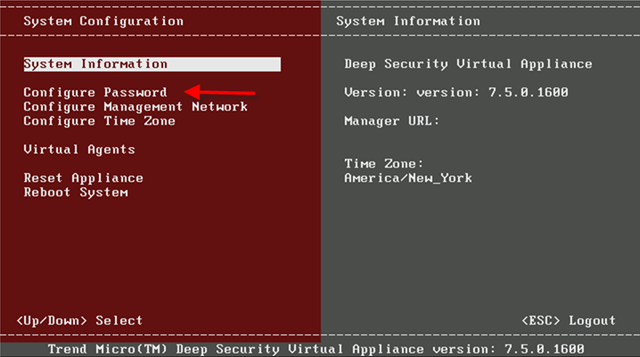

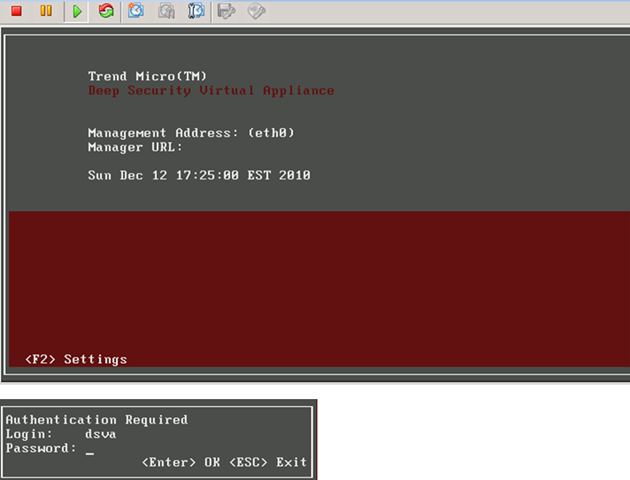

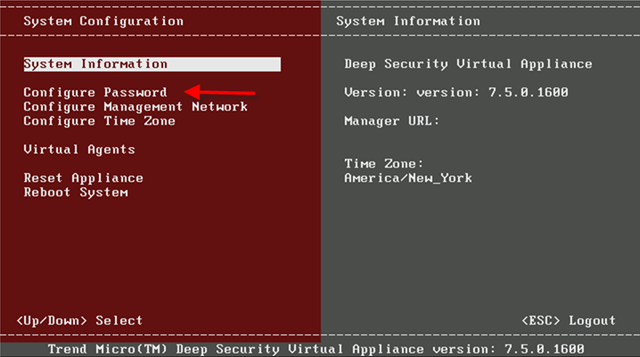

All you need to do is jump onto the ESX host, open the virtual appliance console and change the IP of that appliance. The default username and password are both dsva.

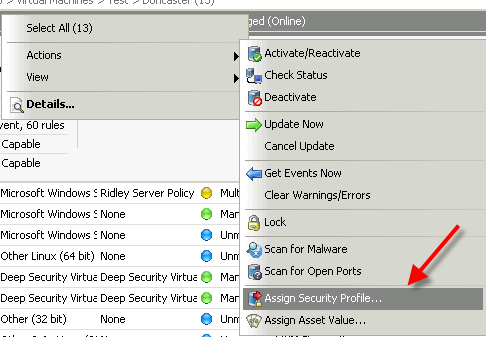

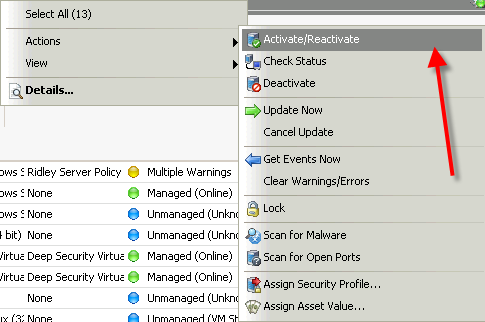

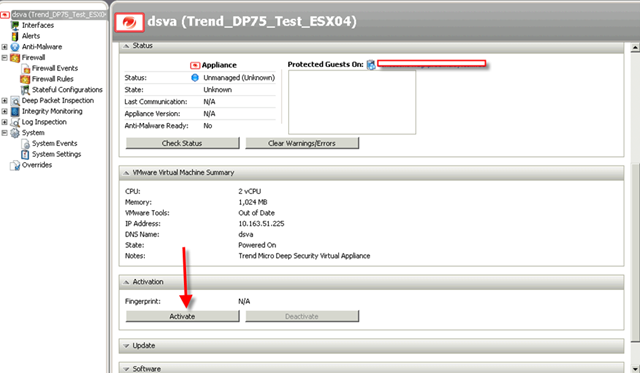

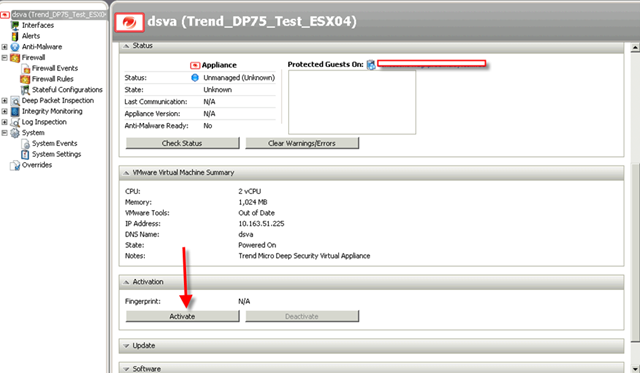

Once you have changed the IP, reboot this VM. Go back to DS Manager and double-click the dsva object to activate it.

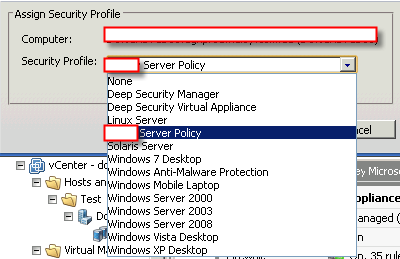

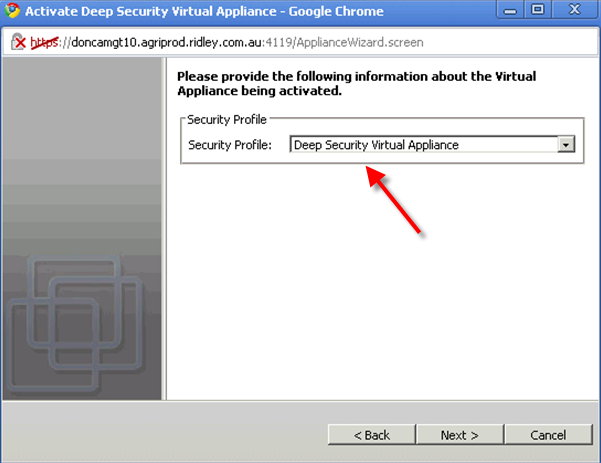

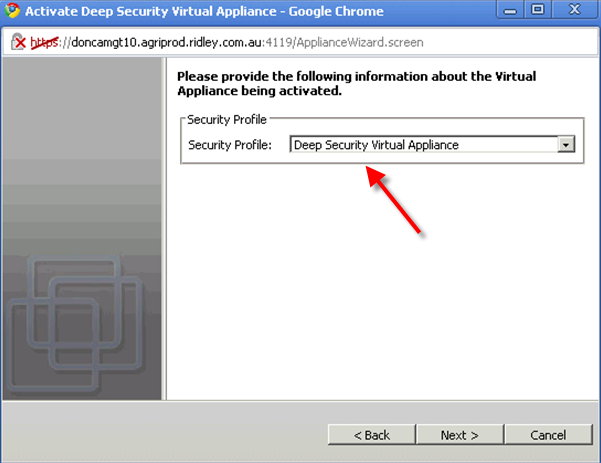

Make sure the security profile is loaded. That’s very important!!

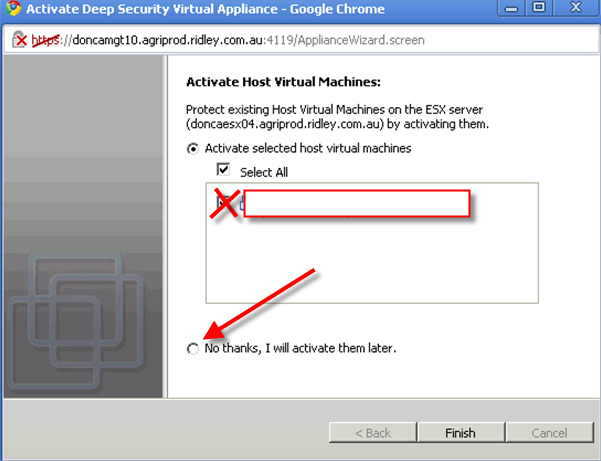

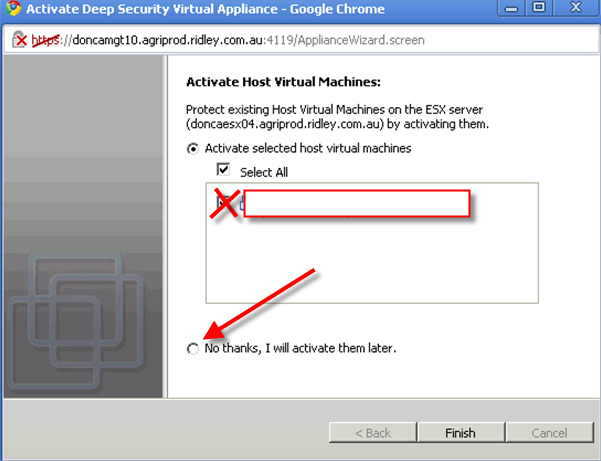

The system will automatically offer you some VMs to protect. You can choose “No” at this stage. Why? Because you haven’t installed the vShield Endpoint agent and the DS Agent on your VMs yet.

With that, the installation steps are finished.

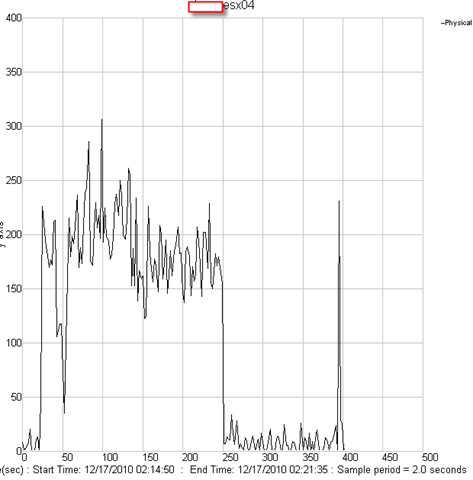

In my next post, I will talk about how to configure Trend Micro Deep Security 7.5, and show performance results compared with OfficeScan as well as virus testing.

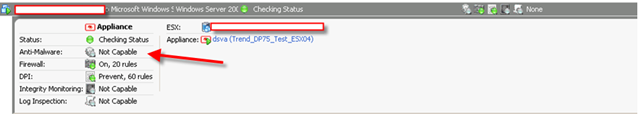

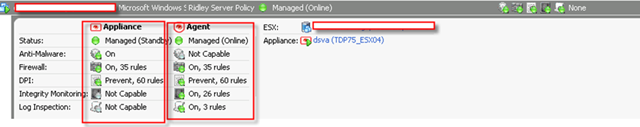

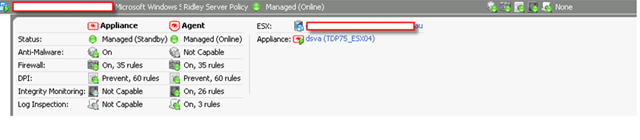

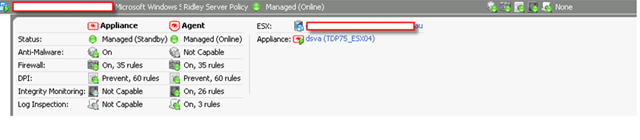

To finish this post, let me show you a picture of what DS Manager looks like when a VM is fully protected.
